Like many early-stage startups, being stretched thin for resources is commonplace. We recently hired a VP of Sales to execute our go-to-market strategy. However, we quickly realized sourcing new leads was a bottleneck without dedicated SDR resources.

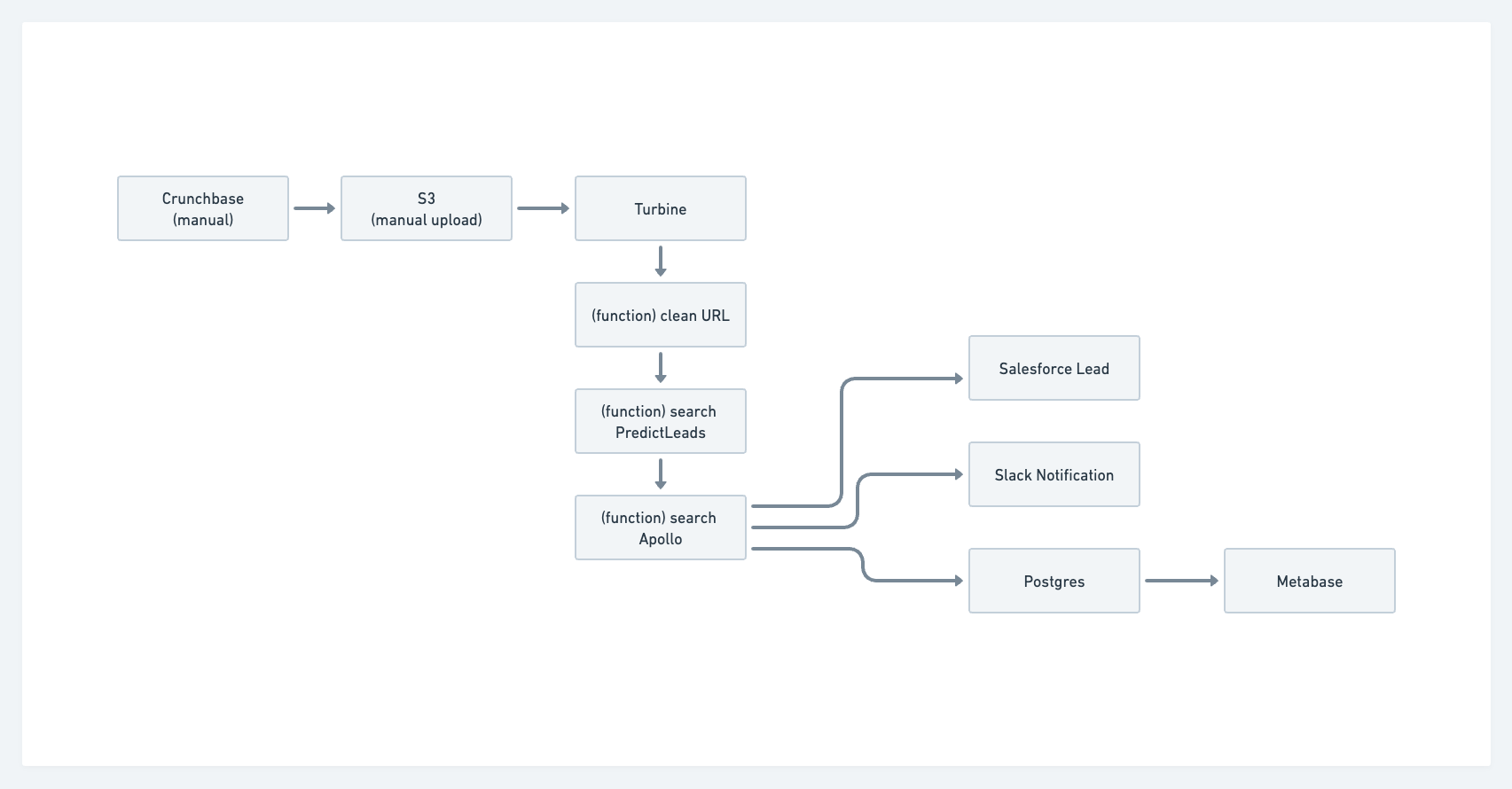

After speaking with Jamie, I realized parts of the lead generation process could be automated with a data application that wouldn’t require us to use a combination of expensive SaaS platforms. We would need to develop a way to query and search companies with specific criteria, find contact information for our ideal customer profile at the company, and send them a message. We came up with the following workflow:

The sales team can query Crunchbase and export a CSV. This could be automated via their API, but it would require us to sign a pricey Enterprise agreement. The engineering team built a S3 uploader for the sales team to upload the exported CSVs to an AWS S3 bucket so the Meroxa Turbine data app can take over. Once we have the company URL, we can query external APIs for enrichment before orchestrating the data into Salesforce. Once that is complete we can send it to Slack to notify the sales team of a new lead has been created and then send it onto Postgres for additional analysis with SQL.

Show Me the Code!

Turbine Data App Requirements

- Node JS

- Meroxa account

- Meroxa CLI

- Meroxa supported PostgreSQL DB

- AWS S3 bucket

- Crunchbase account (Paid)

- PredictLeads account (Paid)

- Apollo (Paid)

- Salesforce account (Paid)

- Slack account

Adding S3 and Postgres Resources to the Data Catalog with the Meroxa CLI

The first step in creating a data app is to add the S3 and PostgreSQL resources to the Meroxa catalog. Resources can be added via the dashboard, but we’re going to show you how to add them to the catalog via the CLI.

Adding S3 (docs)

$ meroxa resource create datalake \\

--type s3 \\

--url "s3://$AWS_ACCESS_KEY:$AWS_ACCESS_SECRET@$AWS_REGION/$AWS_S3_BUCKET"Adding Postgres (docs)

$ meroxa resource create pg_db \\

--type postgres \\

--url postgres://$PG_USER:$PG_PASS@$PG_URL:$PG_PORT/$PG_DB \\

--metadata '{"logical_replication":"true"}'If your database supports logical replication, set the metadata configuration value to true.

Initializing a Turbine JavaScript Data App

$ meroxa apps init prospector --lang jsWhen you initialize the Turbine app, you’ll see we include many comments and boilerplate to help you get up and going. We’ll remove most of this for this example, but take a look around and even execute meroxa apps run to see the output of our sample app.

Cleaning the CSV data from Crunchbase

In Crunchbase, we can do searches like find all private, active companies that have raised a series A in the last year. It returns a table that looks like the following:

When we export the table to CSV, the website URL format is https://www.[incident.io](<http://incident.io>)/. To search PredictLeads, our URL needs to be incident.io according to their docs. We need to write private functions in our Turbine app that remove the protocol (http:// or https://), remove the www, and remove the trailing slash. No need to set up an orchestration system (Airflow, Dagster, Prefect) or complex stream processing platform (Spark, Flink, et al) in order to accomplish this. We can transform the URL with plain old Javascript as seen below.

function cleanURL(companyUrl) {

let noProtocol = removeHttp(companyUrl);

let noWWW = removeWWW(noProtocol);

let noSlash = removeSlash(noWWW);

return noSlash;

}

// Remove protocol, www, and trailing slash from URL

function removeHttp(url) {

return url.replace(/^https?:\\/\\//, "");

}

function removeWWW(noProtocol) {

return noProtocol.replace(/^www\\./, "");

}

function removeSlash(noWWW) {

return noWWW.replace(/\\/$/, "");

}Searching Job Descriptions with PredictLeads

The PredictLeads API allows us to search a company’s job descriptions. In our case, if a company is hiring data-specific roles (e.g. Data Engineering, Analytics Engineering, etc…), they could potentially be a Meroxa customer. We send the cleanURL to another private function searchJobTitles that returns an object containing the companyUrl and jobTitle.

async function makePLRequest(companyUrl) {

const searchTitle = "Data";

try {

const response = await axios.get(

`https://predictleads.com/api/v2/companies/${companyUrl}/job_openings`,

{

headers: {

"X-User-Email": process.env.PL_EMAIL,

"X-User-Token": process.env.PL_TOKEN,

},

}

);

if (response.status == 200) {

response.data.data.forEach((job) => {

const jobTitle = job.attributes.title;

const companyName = job.attributes.

if (jobTitle.search(searchTitle) > 0) {

console.log({ companyUrl, jobTitle });

}

});

}

} catch (error) {

console.error(error);

}

}Finding Contacts with Apollo

Next, we use the Apollo API to find a contact at our target company. Apollo’s API can search job postings, but for the sake of making things more complex to showcase the Meroxa platform we scoped down Apollo’s usage to find contacts. We pass in our companyUrl, to the findIcpAtCompany private function and return the contact information with

async function findIcpAtCompany(companyUrl) {

try {

const contactResults = await findContactByRole(jobTitle, companyUrl);

let icpInfo = {

name: contactResults.people[0].name,

linkedinUrl: contactResults.people[0].linkedin_url,

jobTitle: contactResults.people[0].title,

photo: contactResults.people[0].photo_url,

email: contactResults.people[0].email,

company: contactResults.organization.name,

website: contactResults.organization.website

}

return icpInfo;

} catch (error) {

console.error(error);

}

}

async function findContactByRole(jobTitle, companyUrl) {

try {

const response = await axios.post("https://api.apollo.io/v1/people/match",

{

headers: {

"Content-Type": "application/json",

"Cache-Control": "no-cache"

},

data: {

"api_key": process.env.APOLLO_API_KEY,

"q_organization_domains": companyUrl,

"person_titles": `[${jobTitle}']

}

}

);

} catch (error) {

console.error(error);

}

return response;

}Sending Leads to Salesforce

Now that we have all of our data we can send it to Salesforce via their API. While we do have a Salesforce connector available via Conduit, I wanted to showcase Turbine’s ability to leverage both the Meroxa platform and regular code for data movement. To send data into Salesforce, I will use the jsforce Node.js library.

const jsforce = require('jsforce');

async function sendToSalesforce(companyInfo) {

var conn = new jsforce.Connection({

instanceUrl : process.env.SFDC_URL,

accessToken : process.env.SFDC_ACCESS_TOKEN

});

try {

await conn.subject("Account").create(

{ Name : `$companyInfo.name` }, // add whatever fields you want here

function(err, ret) {

if (err || !ret.success) { return console.error(err, ret); }

console.log("Created record id : " + ret.id);

}

)

} catch (error) {

console.error(error);

}

}Notifying the Sales Team in Slack

Once a new lead is in Salesforce, we want to notify the sales team in their Slack channel so they can begin outreach. You’ll need to get a token from the Slack settings and in this case I’m using a bot user token so I can post as the Prospected app. If I want to format the message, I could include a blocks object

async function sendSlackNotification(companyInfo) {

const slackToken = process.env.SLACK_BOT_USER_TOKEN;

run().catch(err => console.log(err));

async function run() {

const url = 'https://slack.com/api/chat.postMessage';

const res = await axios.post(url, {

channel: '#sales',

icon_emoji: ':moneybag:',

username: 'Prospector',

text: `New Contact: ${companyInfo}`

}, { headers: { authorization: `Bearer ${slackToken}` }

});

console.log('Done', res.data);

}

}Completing the Turbine Data App

Now that we have all the functions completed, the last step is to wire this up to orchestrate the data. We added a PostgreSQL resource as seen below for future analysis or to power a more full-featured dashboard.

// Import statements

// Main app code

exports.App = class App {

digForGold(csvFiles) {

csvFiles.forEach((csv) => {

fs.createReadStream(csvLocation)

.pipe(csv({ headers: true, skipLines: 1 }))

.on("error", (error) => console.error(error))

.on("data", (data) => makeRequest(data))

.on("end", () => {

console.log("done");

});

}

async function makeRequest(data) {

const companyUrl = data["_10"];

const company = cleanURL(companyUrl);

const plResults = makePLRequest(companyUrl);

const contactInfo = findIcpAtCompany(companyUrl);

const sfdcResponse = sendToSalesforce(contactInfo);

const slackResponse = sendSlackNotifcation(contactInfo);

}

async run(turbine) {

let source = await turbine.resources("s3");

let destination = await turbine.resources("postgres");

let csvFiles = await source.records("s3BucketName");

let prospected = await turbine.process(csvFiles, this.digForGold)

let analytics = await destination.write(prospected, "salesLeads");

}

}Conclusion

This was one of the more complex use cases, but it helped exercise and showcase the power of Turbine. There’s so much power in leveraging plain code interspersed with the advantages Turbine provides. For obvious reasons, we aren’t open sourcing this app 😊 but if you have questions, please contact us via our Discord channel or at support@meroxa.com.

If you’d like to see more data app examples, please feel free to make your request in our Discord channel. Otherwise, Get started by requesting a free demo of Meroxa and build something cool. Your app could also be featured in our “Data App Spotlight” series.