Today, software engineers have many tools for moving data from one place to another. Conduit, our open-source data integration tool written in Go, includes an API that developers can use to build pipelines programmatically. Because Conduit ships as a tiny single binary, it is a lightweight and efficient way to move that data.

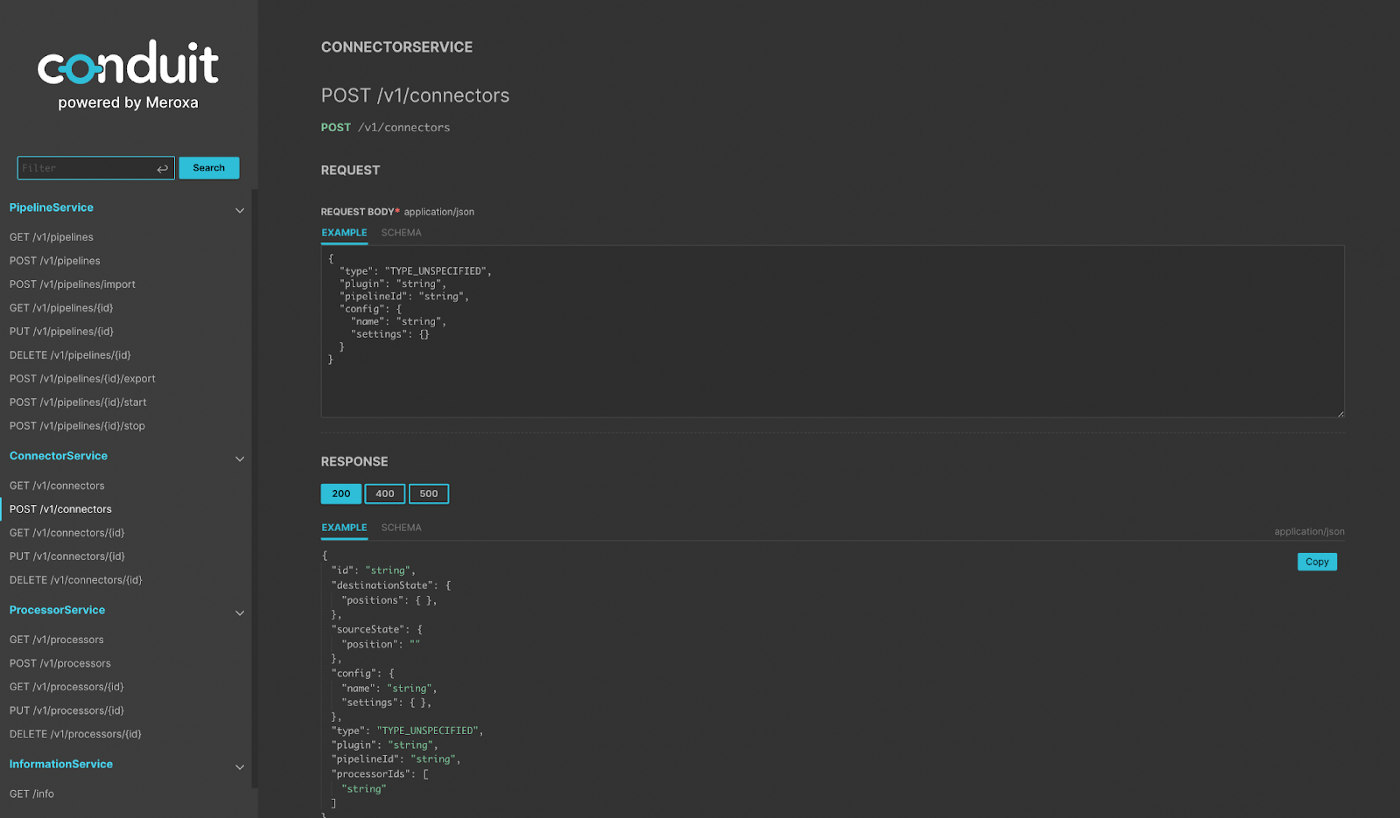

Today, Conduit provides RESTful HTTP and gRPC pipeline APIs that allow you to perform actions such as:

- Creating data pipelines

- Creating connectors (e.g., PostgreSQL, Kafka, File)

- Starting/stopping pipelines

These APIs allow you to fully manage the lifecycle of a pipeline, from creation to teardown. Conduit provides both interfaces, but the examples and use case in the rest of this guide focus on the HTTP API.
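As a rough sketch of what that lifecycle could look like over HTTP, the hypothetical helper below maps each action to a method and URL. Only the `/v1/connectors` path comes from the Node.js example later in this guide; the pipeline paths, including the `start` and `stop` sub-paths, are assumptions that should be checked against the Conduit API reference.

```javascript
// Hypothetical helper: maps pipeline lifecycle actions to HTTP requests.
// Only POST /v1/connectors appears in this guide; the pipeline paths
// below are assumptions about the v1 HTTP API.
const CONDUIT_HOST = 'http://localhost:8080';

function lifecycleRequest(action, pipelineId) {
  const routes = {
    createPipeline: { method: 'POST', path: '/v1/pipelines' },
    createConnector: { method: 'POST', path: '/v1/connectors' },
    startPipeline: { method: 'POST', path: `/v1/pipelines/${pipelineId}/start` },
    stopPipeline: { method: 'POST', path: `/v1/pipelines/${pipelineId}/stop` },
  };

  const route = routes[action];
  if (!route) {
    throw new Error(`Unknown lifecycle action: ${action}`);
  }
  return { method: route.method, url: `${CONDUIT_HOST}${route.path}` };
}

// Build (but do not send) the request that would start a pipeline.
console.log(lifecycleRequest('startPipeline', 'my-pipeline-id'));
```

Keeping the routes in one table makes it easy to correct a path in a single place if the API differs from these assumptions.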

Why is this important?

Having access to an API matters when you write software that moves data around, because it lets you think differently about data integration. Here are three advantages:

Abstraction — The software you write can focus on the task at hand rather than on the mechanics of moving data.

Automation — Your code can fully automate the pipeline lifecycle. You can build tools to orchestrate data movement. All you need is the Conduit binary and an HTTP library.

Language-Agnostic — You can interface with the HTTP server from any programming language.

Creating a Pipeline using Node.js

For example, let’s say you wanted to build a new tool that moves data from PostgreSQL to a file. This could be the case for performing a data backup or downloading data for analysis. In this case, the tool’s job is to move data from one place to another.

Now, there are many ways to approach this problem. But here, I’ll describe how we could approach this with Conduit. In this case, we can write a script that uses the HTTP API to:

- Create a new pipeline.

- Create a PostgreSQL connector to query data from PostgreSQL.

- Create a File Connector to store the result in a file.

- Run the Pipeline.
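The four steps above can be sketched as a single function. This is a dry run under stated assumptions, not a definitive implementation: `buildPipeline` and `createDryRunClient` are hypothetical names, the pipeline paths and request bodies are assumed, and the stand-in client records calls instead of contacting a Conduit server.

```javascript
// `post` is any function that sends a POST request to the given path and
// resolves with the parsed response body (for example, a thin HTTP wrapper).
async function buildPipeline(post) {
  // 1. Create a new pipeline (path and body shape are assumptions).
  const pipeline = await post('/v1/pipelines', { config: { name: 'pg-to-file' } });

  // 2. Create a PostgreSQL source connector attached to the pipeline.
  const source = await post('/v1/connectors', {
    type: 'TYPE_SOURCE',
    pipelineId: pipeline.id,
    config: { name: 'pg' },
  });

  // 3. Create a file destination connector (type value is an assumption).
  const destination = await post('/v1/connectors', {
    type: 'TYPE_DESTINATION',
    pipelineId: pipeline.id,
    config: { name: 'file' },
  });

  // 4. Run the pipeline (the start path is an assumption).
  await post(`/v1/pipelines/${pipeline.id}/start`, {});

  return { pipeline, source, destination };
}

// Stand-in client that records calls and returns fake IDs.
function createDryRunClient() {
  const calls = [];
  let nextId = 1;
  const post = async (path, body) => {
    calls.push({ path, body });
    return { id: `dry-run-${nextId++}` };
  };
  return { post, calls };
}

// Print the sequence of requests the script would send.
const client = createDryRunClient();
buildPipeline(client.post).then(() => {
  client.calls.forEach((call) => console.log('POST', call.path));
});
```

Passing the HTTP function in as a parameter keeps the orchestration logic separate from the transport, so the same steps can be exercised without a running server.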

Note: Conduit does ship with a UI that gives you an easy-to-use interface for building pipelines, and it is a great place to start. However, building the pipeline with code lets us review, commit, and deploy it like the other critical components of our infrastructure. With Conduit, you can stop writing one-off scripts to move data.

From a high level, here are the tasks our code needs to perform. To begin writing this, we need to:

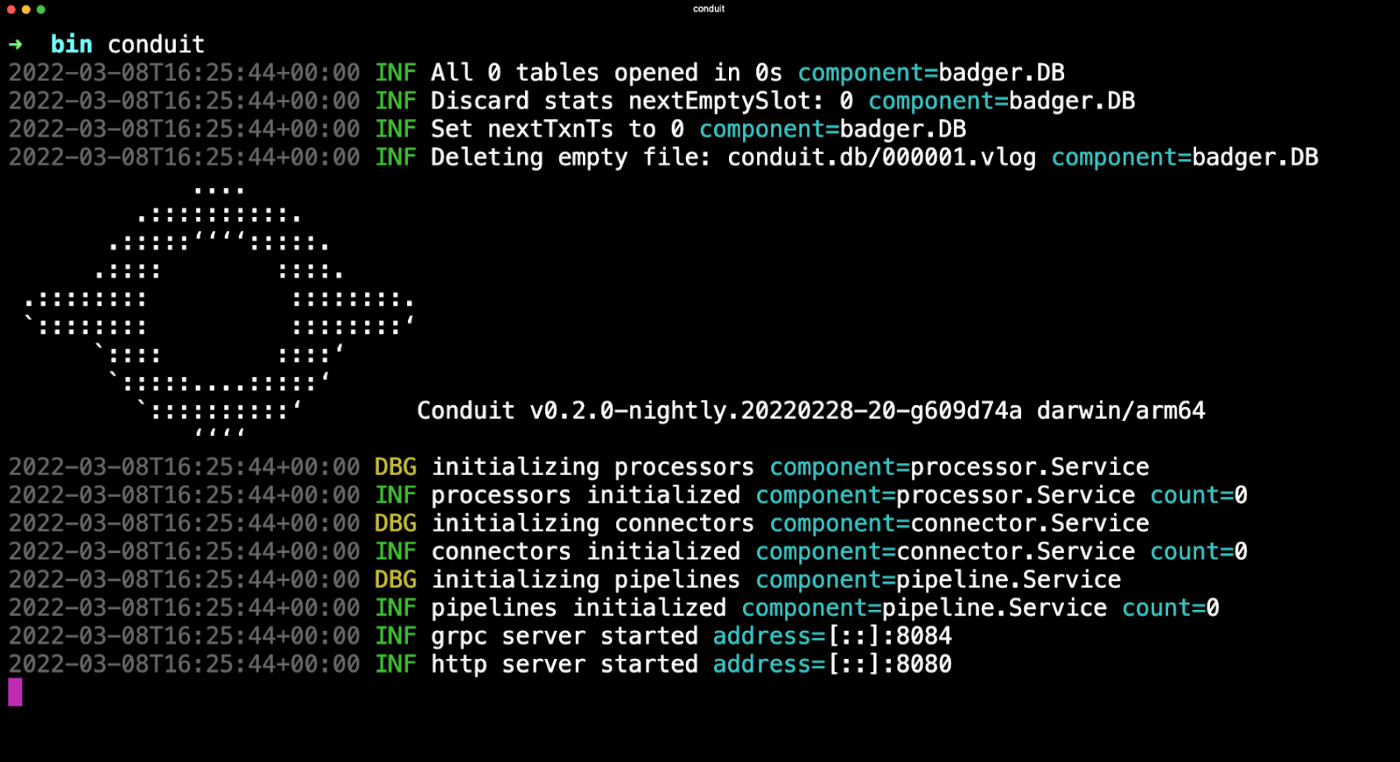

First, start Conduit to get the REST API server up and running:

In the above graphic, you can see that, by default, the HTTP server runs on port 8080 and the gRPC server runs on port 8084.

Next, we can use any generic HTTP client from any language to interact with the Conduit API. Here is an example using the Node.js Axios HTTP library:

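```javascript
const axios = require('axios');

const POSTGRES_TABLE = 'my_table';
const POSTGRES_URL = 'postgres://user:password@host:port/database';
const CONDUIT_HOST = 'http://localhost:8080';

// A function to call the Conduit API and create a pipeline.
// Assumption: the published snippet used `pipeline.id` without defining
// `pipeline`; this endpoint and request body are a sketch of that missing step.
async function createPipeline(config) {
  try {
    const response = await axios.post(`${CONDUIT_HOST}/v1/pipelines`, config);
    return response.data;
  } catch (error) {
    console.log(error);
    throw Error('Could not create pipeline');
  }
}

// A function to call the Conduit API and create a connector.
async function createConnector(config) {
  try {
    const response = await axios.post(`${CONDUIT_HOST}/v1/connectors`, config);
    return response.data;
  } catch (error) {
    console.log(error);
    throw Error('Could not create connector');
  }
}

const main = async () => {
  // The pipeline name here is a placeholder.
  const pipeline = await createPipeline({ config: { name: 'pg-to-file' } });

  // Connector configuration
  // See more: https://github.com/ConduitIO/conduit-connector-postgres
  const postgresConfig = {
    type: 'TYPE_SOURCE',
    plugin: '/pkg/plugins/pg/pg',
    pipelineId: pipeline.id,
    config: {
      name: 'pg',
      settings: {
        table: POSTGRES_TABLE,
        url: POSTGRES_URL,
        cdc: 'false',
      },
    },
  };

  const connector = await createConnector(postgresConfig);
  console.log(pipeline, connector);
};

main();
```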
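Because the API is plain HTTP, the same kind of request can be sent without Axios. The sketch below assumes Node.js 18 or later, where `fetch` is available globally; `postJson` is a hypothetical helper name, and the third parameter exists only so that a substitute `fetch` can be supplied in tests.

```javascript
// Send a JSON POST request and return the parsed response body.
// `fetchImpl` defaults to the global fetch available in Node.js 18+.
async function postJson(url, body, fetchImpl = fetch) {
  const response = await fetchImpl(url, {
    method: 'POST',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify(body),
  });

  // Surface non-2xx responses as errors instead of returning them silently.
  if (!response.ok) {
    throw new Error(`Conduit API returned HTTP ${response.status}`);
  }
  return response.json();
}

// Example call (not executed here, since it needs a running Conduit server):
// postJson('http://localhost:8080/v1/connectors', connectorConfig)
//   .then((connector) => console.log(connector));
```

Checking `response.ok` matters here because `fetch` resolves successfully even for 4xx and 5xx responses, whereas Axios rejects on them by default.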

To dig in deeper, you can download and run a full example from GitHub.

What’s Next:

I hope this is the foundation for your next big data project. Now it’s your turn: try this example with your own use case, or port it to another programming language.

Here are some guides you can use to dig into Conduit:

Here are some ways you can connect with us:

- Chat with the Conduit team in the Discord Community.

- Request features or ask questions about Conduit in GitHub Discussions.

- Send bug reports to GitHub Issues.

- Check out the Conduit Documentation.

- Show us love on Twitter.

I can’t wait to see what you build 🚀